Subscribe to the newsletter on LinkedIn and receive new editions in your feed (and bonus content!)

Big Story

Observability as an API Governance Requirement

API monitoring based on latency and error rates does not capture how agents use APIs across multi-step workflows.

Without traceability across steps, issues such as prompt injection, incorrect tool usage, or excessive API calls are difficult to detect.

Observability requires tracking tool calls, credential usage, and data retrieval across execution paths.

Runtime visibility is required to enforce access control beyond the initial authentication step.

API calls generated by agents follow multi-step execution paths rather than single request-response patterns. A single task can involve multiple API calls, data retrieval steps, intermediate processing, and tool invocations before producing an output. These steps may span multiple services and, in some cases, multiple agents interacting with each other. Each step can succeed individually, returning valid responses, while the overall outcome is incorrect, incomplete, or based on unintended inputs.

Standard monitoring systems are designed to detect failures such as timeouts, high latency, or error responses. In agent-driven workflows, those signals may not appear even when the system is producing incorrect results. Gateway logs can show successful API calls, expected response times, and no visible errors. Yet they do not capture how decisions were made between calls, how data was selected, or how outputs were constructed from multiple steps.

A common failure pattern involves context retrieval and tool invocation. An agent may retrieve external or internal data, pass it into a sequence of API calls, and generate an output that appears structurally valid. If the retrieved data is incorrect, manipulated, or incomplete, the resulting output can still pass validation checks. In such cases, the failure does not appear as an error condition but as a logical inconsistency that is only visible when tracing the full sequence of actions taken by the system.

Microsoft’s guidance on observability for AI systems emphasizes the need to track execution at the task or conversation level rather than at the level of individual API requests. This includes assigning identifiers to each task, recording all tool invocations, capturing the source of retrieved data, and linking these elements across multiple steps and agents. Without this level of traceability, it is difficult to reconstruct how a specific output was produced or to identify where incorrect inputs entered the system.
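To make task-level tracing concrete, here is a minimal Python sketch. It assumes a hypothetical in-process recorder rather than any vendor SDK, and it is not taken from Microsoft's guidance. Each step gets its own event ID and shares the task's identifier. Retrievals record where their data came from, and every step links to the steps whose results it consumed, so an output can be walked back to the inputs that produced it. `TaskTrace`, `record`, `lineage`, and the tool names are all illustrative.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TraceEvent:
    """One recorded step of an agent task (hypothetical schema)."""
    event_id: str
    task_id: str
    agent: str
    kind: str                      # e.g. "retrieval", "tool_call", "output"
    name: str                      # tool or data-source name
    source: Optional[str] = None   # where retrieved data came from, if any
    parents: list = field(default_factory=list)  # event_ids whose results this step used


class TaskTrace:
    """Collects every step of one task, across agents, under a shared task_id."""

    def __init__(self) -> None:
        self.task_id = str(uuid.uuid4())
        self.events: dict = {}

    def record(self, agent, kind, name, source=None, parents=()):
        event = TraceEvent(str(uuid.uuid4()), self.task_id, agent, kind, name,
                           source, list(parents))
        self.events[event.event_id] = event
        return event.event_id

    def lineage(self, event_id):
        """Walk parent links back from a step to every step that fed into it."""
        seen, order, stack = set(), [], [event_id]
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            order.append(self.events[current])
            stack.extend(self.events[current].parents)
        return order


# Usage: two agents cooperate on one task; all names here are illustrative.
trace = TaskTrace()
page = trace.record("researcher", "retrieval", "fetch_page", source="https://example.com/pricing")
quote = trace.record("pricing-agent", "tool_call", "get_quote", parents=[page])
answer = trace.record("researcher", "output", "final_answer", parents=[quote])

# Which external sources influenced the final answer?
sources = [e.source for e in trace.lineage(answer) if e.source]
print(sources)  # ['https://example.com/pricing']
```

If the retrieved page had been manipulated, the lineage query is what connects the final answer back to that specific retrieval, even though every individual call succeeded.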

For API and platform teams, this introduces new instrumentation requirements. Observability must extend into the runtime layer where agents determine which APIs to call, what parameters to use, and how intermediate results are combined. This includes tracking tool usage patterns, monitoring credential usage across services, and recording how data flows between steps. Efforts such as the OpenTelemetry semantic conventions for generative AI systems are beginning to define how this telemetry can be standardized, including representations for agent actions, tool calls, and retrieval steps.
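Once tool calls are recorded as events, usage patterns and credential footprints can be derived with simple aggregation. The sketch below assumes a hypothetical flat event format (`task_id`, `tool`, `credential`, `service`). It does not follow the OpenTelemetry attribute names, which should be taken from the current spec. The sketch flags tasks that call one tool unusually often and shows which services each credential actually touched.

```python
from collections import Counter, defaultdict

# Hypothetical runtime events; in practice these would come from trace/span data.
events = [
    {"task_id": "t1", "tool": "search_orders", "credential": "svc-orders", "service": "orders-api"},
    {"task_id": "t1", "tool": "search_orders", "credential": "svc-orders", "service": "orders-api"},
    {"task_id": "t1", "tool": "search_orders", "credential": "svc-orders", "service": "orders-api"},
    {"task_id": "t1", "tool": "issue_refund", "credential": "svc-orders", "service": "payments-api"},
    {"task_id": "t2", "tool": "search_orders", "credential": "svc-orders", "service": "orders-api"},
]

MAX_CALLS_PER_TOOL = 2  # illustrative per-task threshold, not a recommended value


def credential_footprint(events):
    """Map each credential to the set of services it was actually used against."""
    footprint = defaultdict(set)
    for e in events:
        footprint[e["credential"]].add(e["service"])
    return dict(footprint)


def excessive_tool_use(events, limit):
    """List (task_id, tool, count) where a single task called one tool more than `limit` times."""
    counts = Counter((e["task_id"], e["tool"]) for e in events)
    return sorted((task, tool, n) for (task, tool), n in counts.items() if n > limit)


print(credential_footprint(events))
print(excessive_tool_use(events, MAX_CALLS_PER_TOOL))  # [('t1', 'search_orders', 3)]
```

Here the footprint shows a credential named for the orders service also reaching `payments-api`. A gateway log of individually successful calls would not surface this directly.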

Access control enforced at the gateway remains necessary, but it does not provide visibility into how permissions are exercised after access is granted. When agents operate continuously and chain multiple API calls, governance depends on understanding how credentials are used throughout execution. Observability at the runtime level provides the data required to evaluate whether actions align with defined policies and expected behavior.
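A minimal sketch of the gap between "access granted" and "access used as intended" follows, assuming a hypothetical runtime policy that lists which scopes each kind of task is expected to exercise. The gateway grant here is deliberately broad. The check runs over recorded actions after authentication and flags calls that were permitted but do not fit the task's purpose. `GRANTED_SCOPES`, `EXPECTED_SCOPES`, and the task types are illustrative, not part of any specific gateway product.

```python
from dataclasses import dataclass

# Scopes the gateway granted to the agent's credential at authentication time.
GRANTED_SCOPES = {"orders:read", "payments:write", "tickets:write"}

# Hypothetical runtime policy: scopes each kind of task is expected to exercise.
EXPECTED_SCOPES = {
    "status_inquiry": {"orders:read"},
    "refund_request": {"orders:read", "payments:write"},
}


@dataclass(frozen=True)
class Action:
    task_id: str
    task_type: str
    endpoint: str
    scope: str  # scope the call relied on, as recorded by the runtime


def evaluate(actions):
    """Flag actions that passed authentication but fall outside the task's expected behavior."""
    findings = []
    for a in actions:
        if a.scope not in GRANTED_SCOPES:
            # The gateway should already have rejected this; record it in case it did not.
            findings.append((a.task_id, a.endpoint, "scope not granted"))
        elif a.scope not in EXPECTED_SCOPES.get(a.task_type, set()):
            findings.append((a.task_id, a.endpoint, "allowed by gateway, unexpected for task"))
    return findings


actions = [
    Action("t7", "status_inquiry", "GET /orders/42", "orders:read"),
    Action("t7", "status_inquiry", "POST /refunds", "payments:write"),
    Action("t8", "refund_request", "POST /refunds", "payments:write"),
]
print(evaluate(actions))
# [('t7', 'POST /refunds', 'allowed by gateway, unexpected for task')]
```

The refund in task `t7` is valid from the gateway's point of view. It only stands out once runtime data links the call to a task whose purpose was a status lookup.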

API Feed

Know the Latest from the World of APIs

Apigee released version 1-17-0-apigee-5 on March 17, moving Risk Assessment v2 monitoring to general availability. Teams can map gateways to security profiles, track security scores through Cloud Monitoring dashboards, and configure threshold-based alerts. The release also adds UI support for managing environment-scoped Key Value Map entries, reducing reliance on manual configuration workflows.

LangChain and NVIDIA announced a joint platform for building and operating agent-based systems, combining LangChain’s development frameworks and LangSmith tracing with NVIDIA’s inference infrastructure, guardrails, and runtime tooling. The integration focuses on linking application-level traces with infrastructure-level telemetry, allowing teams to track agent execution across both software and compute layers.

Microsoft moved its Foundry Agent Service and observability layer to general availability, adding end-to-end tracing across multi-agent workflows and continuous evaluation of production traffic. The system captures how agents interact, what tools are invoked, and how outputs are generated across steps, with alerts integrated into Azure Monitor.

Community Spotlight

Lorinda Brandon: Developer Experience and API Adoption

Lorinda Brandon has worked across API tooling, developer experience, and API program design, with a focus on how developers discover, evaluate, and integrate APIs in production environments. At SmartBear, she contributed to API tooling used for design, testing, and lifecycle management, and she has been involved with OpenAPI for more than eight years. Her work centers on how APIs are used in practice, including how developers interpret documentation, test endpoints, and integrate APIs into existing systems.

Her writing and talks examine the steps developers follow when working with APIs, from discovery through integration and maintenance. This includes how documentation is structured, how quickly developers can understand available endpoints, and how effectively they can validate API behavior. Inconsistent documentation, unclear request and response formats, or missing examples increase integration time and introduce errors during implementation. These issues also affect how often developers need to rely on support channels or internal teams to resolve basic usage problems.

A key area of her work is how API governance is applied within organizations. Teams often define standards for API design, versioning, authentication, and error handling, but these standards are only effective when they are embedded into development workflows. This includes providing templates, sample implementations, and documentation that reflects actual usage patterns. When governance is enforced only through review processes without supporting tools or guidance, teams may implement workarounds or create variations that lead to inconsistencies across services.

Her work also highlights how feedback from API consumers is incorporated into design improvements. This includes identifying where developers face repeated issues, how APIs are being used differently from initial expectations, and where design adjustments are required to support real usage patterns. These feedback loops help reduce integration friction over time and improve consistency across API surfaces.

These considerations extend to automated systems where APIs are consumed programmatically. APIs with consistent schemas, predictable error handling, and clearly defined contracts are easier to integrate into workflows that involve automation or orchestration. When APIs vary across endpoints or lack a clear structure, additional validation logic is required, increasing complexity and the likelihood of runtime failures.

Across these areas, Brandon’s work connects API design and developer experience to adoption, integration effort, and long-term maintenance for both human and automated consumers.

Resources & Events

📅 apidays Singapore (Marina Bay Sands, Singapore - April 14-15, 2026)

apidays Singapore brings together API builders, architects, and platform leaders in one of Asia's biggest fintech and digital transformation hubs, with a strong focus on how APIs are evolving for the AI and agentic era. The program blends practical case studies and technical sessions across API management, security, governance, and automation. Details →

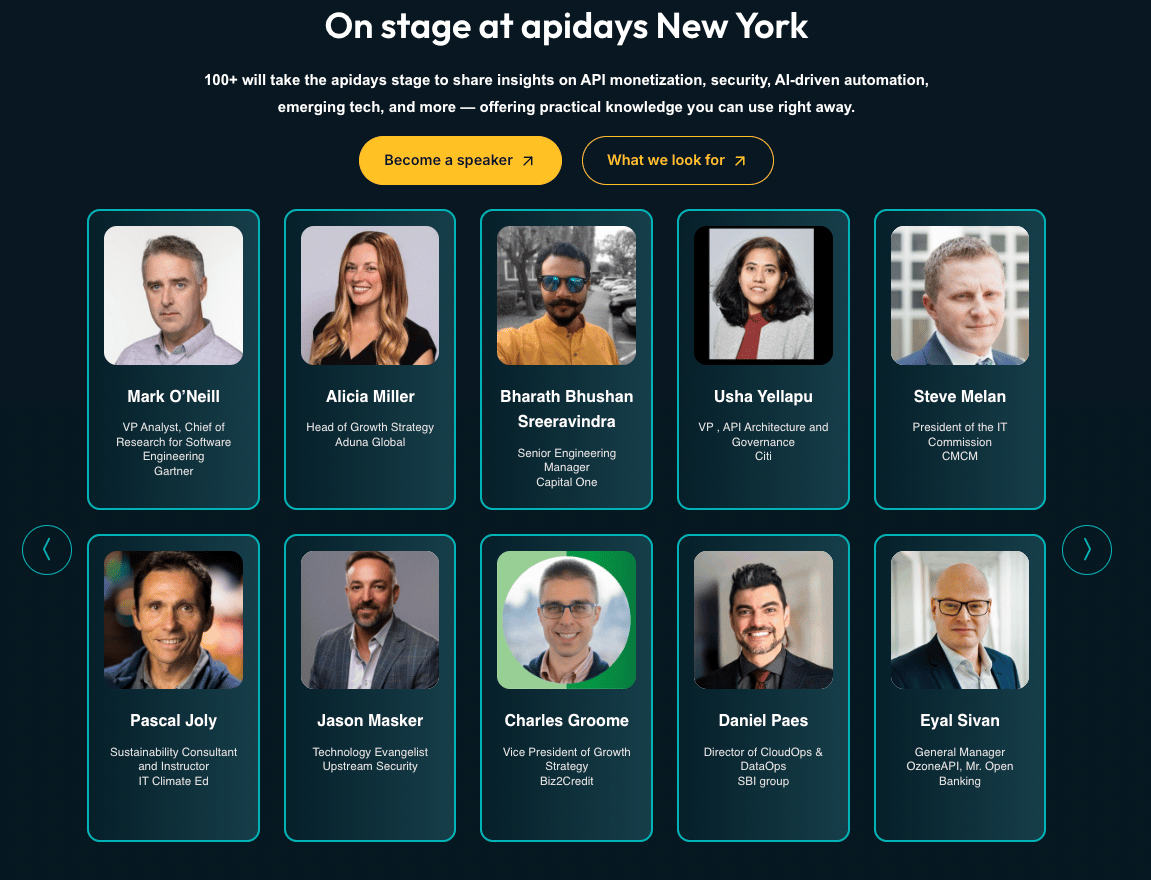

📅 apidays New York (Convene 360 Madison, New York - May 13-14, 2026)

apidays New York is positioned as a high-density gathering for teams operating APIs at scale, with sessions spanning monetization, security, AI-driven automation, and platform governance. It's built for senior practitioners and decision-makers, bringing together 1,500+ participants from 1,000+ companies, making it a strong anchor event for anyone tracking where enterprise API strategy is heading next. Details →

You can find a list of all Apidays events here

Apply to speak at Apidays Singapore, NY, London, Paris, and more here

📅 QCon San Francisco 2026 (Hyatt Regency, San Francisco - November 16-20, 2026)

QCon San Francisco focuses on software architecture and system design, with tracks covering distributed systems, platform engineering, API design, and production system reliability. The program is curated around practitioner-led case studies, with sessions delivered by engineers and architects working on large-scale systems across cloud, AI, and infrastructure domains. Details →

📊 Report Spotlight: API Security Global Market Report 2026 (The Business Research Company)

The Business Research Company’s March 2026 report estimates the API security market at $1.35 billion in 2025, growing to $1.8 billion in 2026, with continued expansion driven by increasing API traffic and broader adoption of automated systems. The report covers capabilities including API discovery, runtime threat detection, testing, and access control, with North America leading adoption and Asia-Pacific showing the fastest growth. The data highlights how API security investment is scaling alongside usage volume and system complexity. Read →

Insight of the Week

Multi-step Workflows in API Interactions

Recent updates to the OpenAI Responses API show a shift in how API interactions are structured in AI systems. Instead of single request-response calls, applications now execute multi-step workflows where models propose actions, invoke tools, and iterate through execution loops inside a managed runtime. These workflows can involve parallel tool calls, intermediate outputs, and repeated API interactions before producing a final result. This changes how API usage needs to be tracked and controlled. API calls are no longer isolated events. They are part of longer execution chains that include retries, context updates, and tool orchestration. As these workflows expand, visibility into how calls are generated and combined becomes necessary to manage cost, performance, and correctness across systems.
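To illustrate why calls become part of a chain, here is a local stand-in for a managed execution loop. It does not use the OpenAI Responses API. `run_chain`, `propose_action`, and the scripted model are hypothetical. Every tool call, including retries, is logged under one `chain_id` with its step and attempt number, and a per-chain call budget stops loops that never converge.

```python
import itertools


class TransientError(Exception):
    """Stand-in for a retryable tool failure (e.g. a timeout)."""


class BudgetExceeded(Exception):
    """Raised when a chain uses more tool calls than its budget allows."""


def run_chain(chain_id, propose_action, tools, max_calls=10, max_retries=2):
    """Drive a propose-act loop, logging every tool call (including retries) under one chain_id."""
    log, context = [], []
    for step in itertools.count():
        action = propose_action(context)
        if action["type"] == "final":
            return action["output"], log
        for attempt in range(max_retries + 1):
            if len(log) >= max_calls:
                raise BudgetExceeded(f"{chain_id}: {len(log)} calls without a final answer")
            log.append({"chain_id": chain_id, "step": step, "tool": action["tool"], "attempt": attempt})
            try:
                result = tools[action["tool"]](**action["args"])
                break  # success: stop retrying this step
            except TransientError:
                continue  # retry the same tool call
        else:
            raise RuntimeError(f"{chain_id}: {action['tool']} failed after {max_retries} retries")
        context.append(result)  # intermediate output feeds the next proposal


# Usage with a scripted "model" and a tool that times out once.
calls = {"n": 0}


def flaky_lookup(order_id):
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientError("timeout")
    return {"order_id": order_id, "status": "shipped"}


def scripted_model(context):
    # Look up the order once, then answer from the result.
    if not context:
        return {"type": "tool", "tool": "lookup_order", "args": {"order_id": "42"}}
    last = context[-1]
    return {"type": "final", "output": f"Order {last['order_id']} is {last['status']}"}


output, log = run_chain("chain-1", scripted_model, {"lookup_order": flaky_lookup})
print(output)    # Order 42 is shipped
print(len(log))  # 2
```

The single user-visible answer here cost two API calls, and that difference only shows up when calls are tracked per chain rather than per request.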

For the Commute

Designing Better APIs in the Age of AI (apidays)

This Apidays session examines practical challenges in API development, particularly the lack of structured API specifications and their impact on integration, testing, and security. While API traffic dominates modern systems, many APIs are still deployed without formal contracts, making it difficult to validate behavior and increasing the risk of errors and unintended data exposure when used by automated systems. The discussion also covers development workflows, including how teams can use API specifications to generate mocks, define multi-step test flows, and reduce dependency on full local environments.

That’s it for this week.

Stay tuned for bold ideas, fresh perspectives, and the next wave of API innovation

-The Apidays Team